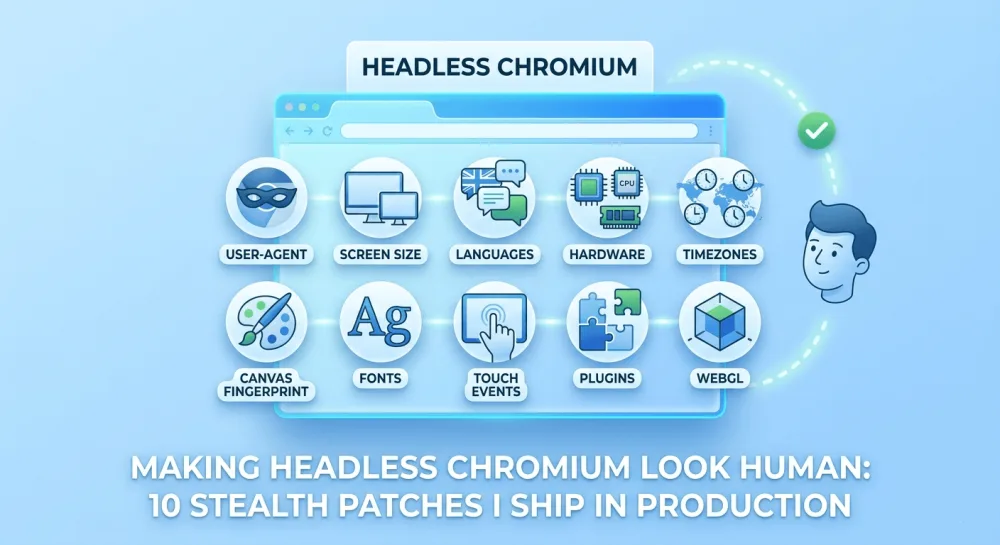

Making headless Chromium look human: 10 stealth patches I ship in production

About a third of the sites I tried to screenshot returned a Cloudflare challenge page. Here are the ten stealth patches I now ship inside screenshotrun to make headless Chromium look like a regular browser, with the actual code and honest notes on what still doesn't work.

A few months ago I was helping a friend build a competitor-tracking tool that needed to screenshot pricing pages from a handful of SaaS companies. About a third of the targets returned a Cloudflare "Checking your browser" page. Two of them returned a completely different layout, clearly an "anti-scraper" version that swapped real prices for placeholder text. One served a flat 403.

The frustrating part: my Playwright script was using headless Chromium. Same engine, same rendering, same JavaScript as the desktop browser. But the sites could tell.

I spent the next two weekends reverse-engineering what bot-detection scripts actually look at. There's no single magic flag. There are about a dozen tiny fingerprints that headless Chromium leaks by default, and any one of them on its own is enough to fail a check. Block them all and most sites stop noticing.

What follows is the exact set of patches I now ship inside screenshotrun under a stealth: true flag. Each one targets a real fingerprint that real detection scripts read, and I'll explain what it does, why I added it, and where it still falls short.

A quick disclaimer before the code: this is for taking screenshots of pages you have a legitimate reason to view. None of these patches defeat serious bot protection like Kasada or PerimeterX, and that's intentional. If your use case is automated abuse, this article isn't going to help you.

How I attach the patches

All ten patches live in a single function I pass to page.addInitScript(). That hook runs before any of the page's own JavaScript, and timing matters here. If you patch navigator.webdriver after the page loads, half the detection scripts have already fingerprinted you and stored the verdict.

```javascript
async function setupStealth(page) {
  await page.addInitScript(getStealthScripts());
}
```

I also launch Chromium with one extra flag that I'll come back to at the end. With that out of the way, let me walk through each patch.
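The post doesn't show getStealthScripts() itself, so here's a minimal sketch of one way it could work. It assumes each patch is kept as a standalone function and the list is joined into a single script string (addInitScript accepts a string as well as a function). The two bodies shown are patches 1 and 3 from below. The array layout and the per-patch try/catch are illustrative choices, not the actual screenshotrun code.

```javascript
// Each patch is a standalone function. Only its source text reaches the
// page, so a patch must not reference variables from the outer scope.
const patches = [
  function hideWebdriver() {
    Object.defineProperty(navigator, 'webdriver', { get: () => false });
  },
  function setLanguages() {
    Object.defineProperty(navigator, 'languages', { get: () => ['en-US', 'en'] });
  },
  // ...the remaining patches follow the same shape
];

// Hypothetical implementation: turns the patch list into one script string
// for page.addInitScript(). Each patch runs in its own try/catch, so a
// failure in one (for example, a property that can't be redefined in some
// Chromium build) doesn't stop the rest from being applied.
function getStealthScripts() {
  return patches
    .map((patch) => `try { (${patch.toString()})(); } catch (e) {}`)
    .join('\n');
}
```

Because the result is a plain string, it drops into setupStealth() unchanged.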

Patch 1: remove the navigator.webdriver flag

The most famous one and the cheapest to fix. Stock headless Chromium sets navigator.webdriver === true. Every bot-detection library on the planet checks this property first.

```javascript
Object.defineProperty(navigator, 'webdriver', { get: () => false });
```

I overwrite the getter so it always returns false. Even when stealth mode is off, I still apply this single patch by default in screenshotrun, because the cost is zero and skipping it makes you instantly detectable.

The reason I use Object.defineProperty instead of navigator.webdriver = false is that webdriver is a getter-only property on Navigator.prototype, so there is nothing to assign to. Plain assignment silently fails (strict-mode code throws a TypeError instead). I learned that one the hard way.
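Here's a small Node sketch of that behaviour. It uses a hypothetical FakeNavigator class that defines webdriver the way browsers do, as a getter-only accessor on the prototype. It's a stand-in for illustration, not the real Navigator interface.

```javascript
// Stand-in for the browser's Navigator: webdriver is a getter-only
// accessor on the prototype, not an own data property.
class FakeNavigator {}
Object.defineProperty(FakeNavigator.prototype, 'webdriver', {
  get() { return true; }, // what stock headless Chromium reports
  configurable: true,
  enumerable: true,
});

const nav = new FakeNavigator();

try {
  // There is no setter: sloppy-mode code ignores this silently,
  // strict-mode code throws a TypeError and lands in the catch.
  nav.webdriver = false;
} catch (e) {
  // strict mode ends up here
}
console.log(nav.webdriver); // true

// defineProperty creates an own property on the instance that shadows
// the prototype getter, which is what patch 1 relies on.
Object.defineProperty(nav, 'webdriver', { get: () => false });
console.log(nav.webdriver); // false
```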

Patch 2: give navigator.plugins a non-empty list

Headless Chromium reports navigator.plugins.length === 0. Real Chrome on a real desktop normally lists at least a PDF viewer (older versions also listed Native Client). Detection scripts check this directly, so I return a short, plausible list:

```javascript
// Built once, so repeated reads return the same object
// (navigator.plugins === navigator.plugins), as in a real browser.
const fakePlugins = [
  { name: 'Chrome PDF Plugin', filename: 'internal-pdf-viewer', description: 'Portable Document Format' },
  { name: 'Chrome PDF Viewer', filename: 'mhjfbmdgcfjbbpaeojofohoefgiehjai', description: '' },
  { name: 'Native Client', filename: 'internal-nacl-plugin', description: '' },
];

Object.defineProperty(navigator, 'plugins', { get: () => fakePlugins });
```

The hash-looking filename for the PDF Viewer (mhjfbmdgcfjbbpaeojofohoefgiehjai) is the actual Chrome extension ID for that plugin. Some detection scripts compare against the real value, so I use the genuine one instead of inventing something.

This isn't a perfect mimic. A deep enough fingerprinter can call navigator.plugins[0].length (yes, plugins have a length property of their own), which my mock doesn't implement properly. But it passes every "is there at least one plugin" check I've encountered in the wild.
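If you do hit a fingerprinter that goes that deep, here's a sketch of a fuller mock. Each plugin gets a length and indexed MIME type entries, and the list gets item() and namedItem(). The makePluginArray name and the MIME type values are assumptions, modelled on older desktop Chrome. The result is still a plain object, so an instanceof PluginArray check would catch it. Since Playwright only injects the init script's source, the helper has to live inside the script itself.

```javascript
// Hypothetical helper: mimics the parts of PluginArray and Plugin that
// simple fingerprinting scripts read. Still a plain object underneath.
function makePluginArray(specs) {
  const plugins = specs.map((spec) => {
    const plugin = {
      name: spec.name,
      filename: spec.filename,
      description: spec.description,
      // A real Plugin's length is the number of MIME types it handles.
      length: spec.mimeTypes.length,
    };
    spec.mimeTypes.forEach((type, i) => {
      plugin[i] = { type, enabledPlugin: plugin };
    });
    return plugin;
  });

  const pluginArray = {
    length: plugins.length,
    item: (index) => plugins[index] ?? null,
    namedItem: (name) => plugins.find((p) => p.name === name) ?? null,
    refresh: () => {},
  };
  plugins.forEach((plugin, i) => {
    pluginArray[i] = plugin;
  });
  return pluginArray;
}

// MIME types are assumptions modelled on older desktop Chrome builds.
const pluginArray = makePluginArray([
  { name: 'Chrome PDF Plugin', filename: 'internal-pdf-viewer', description: 'Portable Document Format', mimeTypes: ['application/x-google-chrome-pdf'] },
  { name: 'Chrome PDF Viewer', filename: 'mhjfbmdgcfjbbpaeojofohoefgiehjai', description: '', mimeTypes: ['application/pdf'] },
  { name: 'Native Client', filename: 'internal-nacl-plugin', description: '', mimeTypes: ['application/x-nacl', 'application/x-pnacl'] },
]);

// Inside the init script, built once so every read returns the same object:
// Object.defineProperty(navigator, 'plugins', { get: () => pluginArray });
```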

Patch 3: set realistic navigator.languages

Headless Chromium often returns an empty array or just ['en-US']. Real browsers almost always have a fallback language too:

```javascript
Object.defineProperty(navigator, 'languages', { get: () => ['en-US', 'en'] });
```

I picked ['en-US', 'en'] because it's the most common combination by a wide margin and matches the user agent I default to. If you're trying to screenshot a localized version of a site, this is the place to vary it. Inside screenshotrun I tie this value to the requested locale rather than hard-coding English everywhere.
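Tying the value to a locale can be as small as this. The sketch rests on two assumptions. The first is a hypothetical languagesForLocale() helper that appends the bare language as the fallback. The second is that the list is passed into the page through Playwright's addInitScript(fn, arg) form. Real browsers return a frozen array from navigator.languages, so the patch freezes its copy too.

```javascript
// Hypothetical helper: derives a navigator.languages list from a locale
// such as 'de-DE', ending with the bare language as the fallback.
function languagesForLocale(locale) {
  const base = locale.split('-')[0];
  return base === locale ? [locale] : [locale, base];
}

// Init-script patch that takes the list as an argument instead of
// hard-coding it. The array is frozen, matching what browsers return.
function patchLanguages(languages) {
  const frozen = Object.freeze([...languages]);
  Object.defineProperty(navigator, 'languages', { get: () => frozen });
}

// With Playwright, the argument is passed alongside the function:
// await page.addInitScript(patchLanguages, languagesForLocale('de-DE'));
```

Playwright's locale context option sets navigator.language and the Accept-Language header. Passing the same locale there keeps those two consistent with the patched list.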

Patch 4: shim window.chrome

This one is sneaky. Real desktop Chrome exposes a global window.chrome object with a runtime API. Headless Chromium does not. Some detection scripts test for typeof window.chrome === 'undefined' as a quick discriminator.

```javascript
if (!window.chrome) {
  window.chrome = {
    runtime: {
      connect: () => {},
      sendMessage: () => {},
      onMessage: { addListener: () => {} },
    },
    loadTimes: () => ({}),
    csi: () => ({}),
  };
}
```

The methods are no-ops, but they exist. The presence is what matters for the basic check.

I want to flag a limitation here. A sufficiently paranoid script can check whether window.chrome.runtime.connect is the real native function or a JavaScript stub by inspecting Function.prototype.toString.call(...). My version fails that check. I haven't found a public site that bothers to test that deeply, but it's possible.
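To make that gap concrete, here's a rough version of such a check. It runs in Node, since V8 stringifies built-in functions the same way it does in Chrome. The looksNative name and the regex approximate what a fingerprinter might do for illustration. They aren't lifted from any particular detection library.

```javascript
// Native functions stringify with a "[native code]" body, while
// JavaScript stubs stringify as their own source text.
function looksNative(fn) {
  if (typeof fn !== 'function') return false;
  const source = Function.prototype.toString.call(fn);
  return /\{\s*\[native code\]\s*\}\s*$/.test(source);
}

const stubConnect = () => {}; // like window.chrome.runtime.connect in patch 4
console.log(looksNative(Math.max));    // true
console.log(looksNative(stubConnect)); // false
```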

Patch 5: fix the permissions API "Notification" loophole

This is my favorite patch in the list because it's so unintuitive. There's a specific test that bot-detection scripts love:

```javascript
Notification.permission === 'denied' &&
  (await navigator.permissions.query({ name: 'notifications' })).state === 'prompt'
```

Real browsers keep the two APIs consistent: if Notification.permission is 'denied', the permissions API reports 'denied' too (and its 'prompt' corresponds to Notification's 'default'). Headless Chromium leaks here: Notification.permission returns 'denied', but the permissions API returns 'prompt'. The mismatch is a dead giveaway, and there are blog posts dedicated to using exactly this trick.

I patch the permissions query to mirror Notification.permission:

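```javascript
const permissions = window.navigator.permissions;
if (permissions && permissions.query) {
  // Bind to the Permissions object: calling the native method detached
  // from it fails with "Illegal invocation".
  const originalQuery = permissions.query.bind(permissions);
  permissions.query = (parameters) => {
    if (parameters && parameters.name === 'notifications') {
      // Notification says 'default' where the Permissions API says 'prompt'.
      const state = Notification.permission === 'default' ? 'prompt' : Notification.permission;
      return Promise.resolve({ state });
    }
    return originalQuery(parameters);
  };
}
```

Notice that I only intercept the notifications query. Everything else falls through to the original implementation, still bound to the real permissions object. That way I don't break sites that legitimately need geolocation or camera permission checks during normal operation.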
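If you want to test for this tell yourself, the check fits in a few lines. The sketch takes the two objects as parameters, an assumption that lets it run outside a browser, and feeds it hypothetical stand-ins for the unpatched headless values. In a page you'd pass Notification and navigator.permissions.

```javascript
// The headless tell described above, as a reusable check.
async function hasNotificationMismatch(notification, permissions) {
  const status = await permissions.query({ name: 'notifications' });
  return notification.permission === 'denied' && status.state === 'prompt';
}

// Stand-ins that reproduce unpatched headless Chromium.
const fakeNotification = { permission: 'denied' };
const fakePermissions = {
  query: () => Promise.resolve({ state: 'prompt' }),
};

hasNotificationMismatch(fakeNotification, fakePermissions).then((mismatch) => {
  console.log(mismatch); // true
});
```

With patch 5 in place the query reports 'denied' as well, so the same call returns false.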

Patch 6: spoof the WebGL vendor and renderer

This is the patch that took me longest to figure out, and it's probably the highest-value one in the entire stack. Detection scripts use a tiny WebGL call to read your GPU info, and headless Chromium reports something like Google Inc. / ANGLE (some software renderer). The string Google Inc. as a vendor immediately flags you.

```javascript
const getParameterOrig = WebGLRenderingContext.prototype.getParameter;
WebGLRenderingContext.prototype.getParameter = function (parameter) {
  if (parameter === 37445) return 'Intel Inc.';
  if (parameter === 37446) return 'Intel Iris OpenGL Engine';
  return getParameterOrig.call(this, parameter);
};
```

Those magic numbers (37445 and 37446) are UNMASKED_VENDOR_WEBGL and UNMASKED_RENDERER_WEBGL. I picked Intel Iris because it's one of the most common GPU/renderer combos on real consumer hardware, so it blends into the background.

If you want a stronger fingerprint, you can rotate the renderer string per request to imitate different machines. I haven't bothered, because a single common identity is enough for the checks I care about.
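Patch 6 as written only touches WebGLRenderingContext, so a script that asks a webgl2 context for the same parameters still sees the real values. Here's a sketch that covers both prototypes and takes the identity as an argument, which is also how you'd rotate it per request. The spoofWebGL name is hypothetical, and the sketch assumes Playwright's addInitScript(fn, arg) form.

```javascript
// Hypothetical generalization of patch 6.
function spoofWebGL({ vendor, renderer }) {
  const UNMASKED_VENDOR_WEBGL = 0x9245; // 37445
  const UNMASKED_RENDERER_WEBGL = 0x9246; // 37446
  // WebGL 1 and WebGL 2 contexts have separate prototypes, so patch each.
  for (const name of ['WebGLRenderingContext', 'WebGL2RenderingContext']) {
    const ctor = globalThis[name];
    if (!ctor) continue; // e.g. WebGL 2 unavailable
    const original = ctor.prototype.getParameter;
    ctor.prototype.getParameter = function (parameter) {
      if (parameter === UNMASKED_VENDOR_WEBGL) return vendor;
      if (parameter === UNMASKED_RENDERER_WEBGL) return renderer;
      return original.call(this, parameter);
    };
  }
}

// One identity per page. Pass a different pair per request to rotate:
// await page.addInitScript(spoofWebGL, {
//   vendor: 'Intel Inc.',
//   renderer: 'Intel Iris OpenGL Engine',
// });
```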

Patch 7: restore HTMLImageElement dimensions

Headless Chromium has a quirk where, in some configurations, image elements report width: 0, height: 0 for images that haven't loaded yet, even when a real browser would compute them from the attributes. A few detection scripts use this as a heuristic.

```javascript
['height', 'width'].forEach((prop) => {
  const imageDescriptor = Object.getOwnPropertyDescriptor(HTMLImageElement.prototype, prop);
  if (imageDescriptor) {
    Object.defineProperty(HTMLImageElement.prototype, prop, {
      ...imageDescriptor,
    });
  }
});
```

To be honest, this patch is the weakest of the ten. It just re-installs the existing descriptor, which fixes a specific edge case I ran into once on a single site and have never been able to reproduce cleanly since. I leave it in because the cost is zero and removing it doesn't help anything. If you're auditing this list and want to drop something, this is the one I'd drop first.

Patches 8 and 9: hardwareConcurrency and deviceMemory

These two go together. Detection scripts read navigator.hardwareConcurrency (CPU cores reported to the page) and navigator.deviceMemory (gigabytes of RAM reported to the page). Headless Chromium running inside a small container often reports values that don't match a typical user machine -- sometimes 1 core, sometimes oddly small memory values.

```javascript
Object.defineProperty(navigator, 'hardwareConcurrency', { get: () => 8 });
Object.defineProperty(navigator, 'deviceMemory', { get: () => 8 });
```

I picked 8 for both because it's the most common configuration on a modern laptop. For mobile-like emulation, drop these to 4. Forget to patch them at all, and a container reporting 1 core makes you stand out instantly in any fingerprint comparison.
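To switch between the two configurations without editing the patch, one option is to pass a profile through Playwright's addInitScript(fn, arg) form. In this sketch the profile names and both helper functions are hypothetical. The power-of-two rule for deviceMemory is an assumption to verify against the Chrome version you're imitating.

```javascript
// Hypothetical profiles matching the values above: 8/8 for a typical
// laptop, 4/4 for mobile-like emulation.
const HARDWARE_PROFILES = {
  desktop: { hardwareConcurrency: 8, deviceMemory: 8 },
  mobile: { hardwareConcurrency: 4, deviceMemory: 4 },
};

// Runs in Node before the page is created. Assumption: Chrome reports
// deviceMemory rounded to a power of two between 0.25 and 8, so any
// other value would stand out.
function assertRealisticProfile(profile) {
  const allowedMemory = [0.25, 0.5, 1, 2, 4, 8];
  if (!allowedMemory.includes(profile.deviceMemory)) {
    throw new RangeError(`Unrealistic deviceMemory: ${profile.deviceMemory}`);
  }
  if (!Number.isInteger(profile.hardwareConcurrency) || profile.hardwareConcurrency < 1) {
    throw new RangeError(`Unrealistic hardwareConcurrency: ${profile.hardwareConcurrency}`);
  }
}

// Runs in the page via addInitScript. Self-contained, because only the
// function's source is injected. `nav` defaults to the page's navigator.
function patchHardware(profile, nav = globalThis.navigator) {
  Object.defineProperty(nav, 'hardwareConcurrency', { get: () => profile.hardwareConcurrency });
  Object.defineProperty(nav, 'deviceMemory', { get: () => profile.deviceMemory });
}

// assertRealisticProfile(HARDWARE_PROFILES.mobile);
// await page.addInitScript(patchHardware, HARDWARE_PROFILES.mobile);
```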

Patch 10: stabilize attachShadow

The last patch is the most superstitious one in the list, and I'll admit it upfront. Some early Puppeteer-detection libraries hooked into Element.prototype.attachShadow to detect tampering. The fix re-defines it as a passthrough so the function reference looks consistent to anything inspecting the prototype:

```javascript
try {
  const originalAttachShadow = Element.prototype.attachShadow;
  Element.prototype.attachShadow = function () {
    return originalAttachShadow.call(this, ...arguments);
  };
} catch (e) {}
```

I added this after seeing it in an old Puppeteer-extra plugin, and removing it didn't cause any of my test sites to flag me. It's wrapped in a try/catch because some browser versions made attachShadow non-writable. Like patch 7, this one is on borrowed time in my codebase.

The browser-launch flag I almost forgot

All ten patches above run inside the page. There's one detection vector you can't fix from JavaScript at all, and that's the --enable-automation switch that automation tools such as Playwright pass to Chromium by default when driving it over the DevTools protocol. Detection scripts can pick it up through navigator state and timing weirdness.

The fix is a launch argument:

```javascript
const { chromium } = require('playwright');

defaultBrowser = await chromium.launch({
  headless: true,
  args: [
    '--no-sandbox', // commonly needed when running as root in containers
    '--disable-setuid-sandbox',
    '--disable-dev-shm-usage', // use /tmp instead of a small /dev/shm in Docker
    '--disable-gpu',
    '--disable-blink-features=AutomationControlled', // the stealth-relevant flag
  ],
});
```

The relevant argument is --disable-blink-features=AutomationControlled. Without it, no amount of in-page patching will save you, because the AutomationControlled Blink feature is what makes navigator.webdriver return true in the first place. With it, navigator.webdriver already reports false, just as it does in a regular browser, and patch 1 above becomes a redundant safety net (which I keep, because belt and suspenders is my brand).
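A quick way to confirm what the flag changes is to read navigator.webdriver from a blank page with no init scripts installed, once with the flag and once without. This is a hypothetical helper. It takes Playwright's chromium object as a parameter, so it has no hard dependency of its own.

```javascript
// Hypothetical smoke test: launches Chromium, optionally with the flag,
// and reads navigator.webdriver before any stealth patch is installed.
async function readWebdriverFlag(chromium, { disableAutomationControlled = true } = {}) {
  const args = disableAutomationControlled
    ? ['--disable-blink-features=AutomationControlled']
    : [];
  const browser = await chromium.launch({ headless: true, args });
  try {
    const page = await browser.newPage();
    return await page.evaluate(() => navigator.webdriver);
  } finally {
    await browser.close(); // always release the browser, even on errors
  }
}

// Compare both runs (inside an async function):
// const { chromium } = require('playwright');
// console.log(await readWebdriverFlag(chromium, { disableAutomationControlled: false }));
// console.log(await readWebdriverFlag(chromium));
```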

What this stack actually catches (and what it doesn't)

After running this set of patches against a few hundred sites, here's a rough sense of where it lands.

It reliably bypasses Cloudflare's basic JavaScript challenges, the kind that don't escalate to a managed challenge or a CAPTCHA. It bypasses the casual if (navigator.webdriver) checks that most homemade detection uses. And it passes the canonical "are you a bot?" test sites like bot.sannysoft.com, which is what I run as a regression test whenever I touch any of this code.

It does not bypass:

Cloudflare Turnstile or any managed challenge that requires real interaction

DataDome, PerimeterX, Kasada and the other commercial anti-bot services (they read behavioral signals my patches don't fake)

Any site that fingerprints via canvas hashes, audio context, or font enumeration (a much bigger patch set is needed for those)

Sites that compare TLS fingerprints (JA3 / JA4) -- that's a network-level fix, not a JavaScript one
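bot.sannysoft.com stays the real regression test. For a quicker local check between changes, you could split the work into two parts. One function collects the patched signals inside the page via page.evaluate(), and the other flags the tells in Node. Both function names and the thresholds are assumptions for illustration. So is treating a SwiftShader WebGL renderer as headless Chromium's software fallback. None of this touches the items in the list above.

```javascript
// Runs inside the page: collects the signals the patches above target.
// Self-contained on purpose, so it can be passed to page.evaluate().
function collectFingerprint() {
  const report = {
    webdriver: navigator.webdriver,
    pluginCount: navigator.plugins ? navigator.plugins.length : 0,
    languages: Array.from(navigator.languages || []),
    hasChromeObject: typeof window.chrome !== 'undefined',
    hardwareConcurrency: navigator.hardwareConcurrency,
    webglRenderer: null,
  };
  try {
    const gl = document.createElement('canvas').getContext('webgl');
    const ext = gl && gl.getExtension('WEBGL_debug_renderer_info');
    if (ext) report.webglRenderer = gl.getParameter(ext.UNMASKED_RENDERER_WEBGL);
  } catch (e) {
    // WebGL can be unavailable; leave webglRenderer as null
  }
  return report;
}

// Runs in Node: turns a report into a list of the tells described above.
function headlessTells(report) {
  const tells = [];
  if (report.webdriver) tells.push('navigator.webdriver is true');
  if (report.pluginCount === 0) tells.push('navigator.plugins is empty');
  if (report.languages.length < 2) tells.push('navigator.languages has no fallback');
  if (!report.hasChromeObject) tells.push('window.chrome is missing');
  if (report.hardwareConcurrency < 2) tells.push('hardwareConcurrency looks like a container');
  if (/SwiftShader/i.test(report.webglRenderer || '')) tells.push('WebGL renderer is a software renderer');
  return tells;
}

// const report = await page.evaluate(collectFingerprint);
// console.log(headlessTells(report)); // an empty array means none of these tells showed up
```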

If you want a deeper take on when to build all this yourself versus paying someone to maintain it, I wrote about that tradeoff in my post on screenshot APIs vs. Puppeteer/Playwright.

How I expose this in the API

Inside screenshotrun, all ten patches plus the launch flag sit behind a single stealth: true parameter on the API:

```bash
curl -X POST https://api.screenshotrun.com/v1/screenshots \
  -H "Authorization: Bearer YOUR_API_KEY" \
  -H "Content-Type: application/json" \
  -d '{
    "url": "https://example.com",
    "stealth": true
  }'
```

I keep stealth opt-in instead of on-by-default for two reasons. First, the patches add a small amount of init-script overhead per page (somewhere in the 5 to 10ms range), and most legitimate screenshots don't need them. Second, some sites legitimately want to know they're being viewed by a headless browser and serve a lightweight fallback layout that's actually nicer to screenshot. Patching navigator.webdriver on those sites makes the result worse, not better.
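For calling the same endpoint from Node 18+, which ships a global fetch, a minimal sketch could look like this. The buildScreenshotRequest helper and the SCREENSHOTRUN_API_KEY environment variable are hypothetical. The sketch mirrors only the two fields shown in the curl call, with an explicit JSON Content-Type header. The response format isn't covered in this post, so check res.headers before deciding how to read the body.

```javascript
// Hypothetical helper: builds the same POST as the curl call above.
function buildScreenshotRequest(apiKey, pageUrl, { stealth = false } = {}) {
  return {
    endpoint: 'https://api.screenshotrun.com/v1/screenshots',
    init: {
      method: 'POST',
      headers: {
        Authorization: `Bearer ${apiKey}`,
        'Content-Type': 'application/json',
      },
      body: JSON.stringify({ url: pageUrl, stealth }),
    },
  };
}

// Usage (Node 18+, inside an async function):
// const { endpoint, init } = buildScreenshotRequest(
//   process.env.SCREENSHOTRUN_API_KEY, 'https://example.com', { stealth: true });
// const res = await fetch(endpoint, init);
```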

But stealth on its own is only half the job. Even after the site stops noticing your headless browser, there's still a pile of other problems: cookie banners and chat widgets covering half the screen, lazy-loaded images that won't render without scrolling, and pages behind a login that need cookies and auth headers passed into Playwright. I've covered each of these separately, and they all stack with what's described here.

I hope this saves you the weekend I spent stepping through bot.sannysoft.com test by test. If you find a fingerprint check I missed, send it over and I'll add it to the list.

Vitalii Holben
