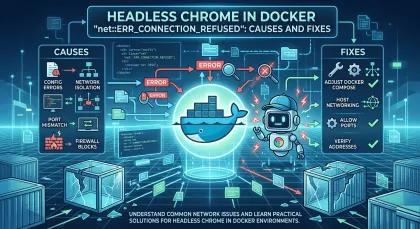

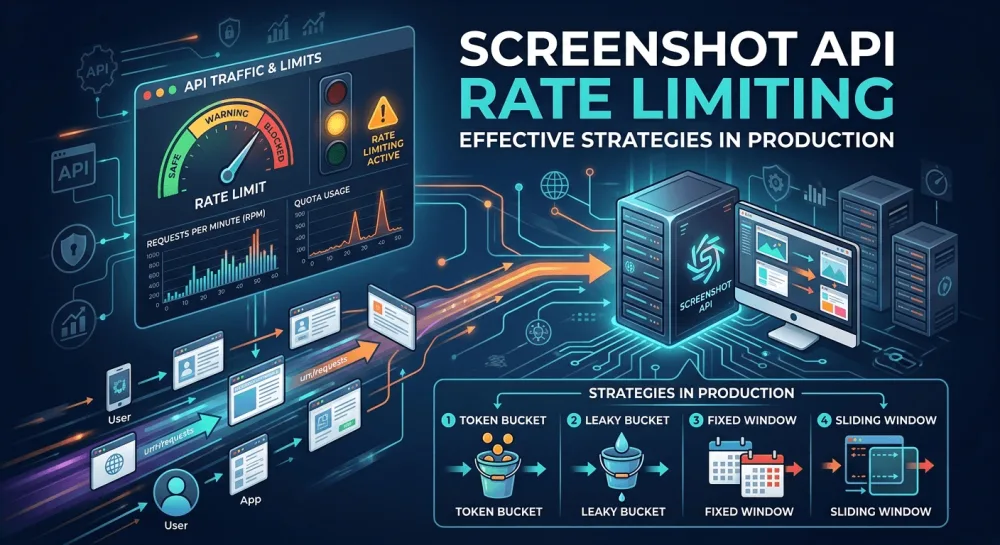

Screenshot API rate limiting strategies in production

Most rate limiting guides only cover retry strategies. That's only half the problem. Five concrete strategies — proactive (token bucket, queue) and reactive (Retry-After, exponential backoff, circuit breaker) — with Node.js code.

Most rate limiting guides come down to one piece of advice: use exponential backoff. That solves half the problem — reacting to limit violations. The other half, which almost no one mentions, is preventing violations in the first place. And those two problems are solved by completely different code.

In production, you don't get to pick one. Without the proactive side, your client constantly bumps into limits, burning API credits on requests that are doomed to 429 before they're even sent. Without the reactive side, a single transient API hiccup brings down your entire worker. Below are concrete approaches, split into those two classes, with real Node.js code.

Two sides of the same problem

Proactive strategies work before the request goes out. They control how many requests the client sends in the first place and don't let the client exceed a known limit. Token buckets and client-side queues belong here.

Reactive strategies work after the API returns a refusal. They properly handle 429 Too Many Requests, 503 Service Unavailable, and network errors, so that the client doesn't lose requests and doesn't pile more load onto the API. Retry-After handling, exponential backoff with jitter, and circuit breaker patterns live in this group.

A real production client uses both sides. The proactive side prevents roughly 90% of violations, and the reactive side handles the remaining 10% that inevitably happen due to clock drift, transient API spikes, and distributed workers that don't know about each other.

Token bucket: pacing on the client side

The simplest proactive approach. You have a "bucket" of tokens, a new token is added at a known rate (one per second for a 60 req/min limit, for example), and each request takes one token. If there are no tokens, the request waits.

This is the ideal baseline for a single worker that knows its rate limit:

```javascript
class TokenBucket {
  constructor(capacity, refillRatePerSecond) {
    this.capacity = capacity;
    this.tokens = capacity;
    this.refillRate = refillRatePerSecond;
    this.lastRefill = Date.now();
  }

  // Resolves once a token is available, then consumes it
  async acquire() {
    while (true) {
      this.refill();
      if (this.tokens >= 1) {
        this.tokens -= 1;
        return;
      }
      // Sleep just long enough for the missing fraction of a token to refill
      const waitMs = Math.ceil((1 - this.tokens) * 1000 / this.refillRate);
      await new Promise((r) => setTimeout(r, waitMs));
    }
  }

  // Adds tokens for the time elapsed since the last refill, capped at capacity
  refill() {
    const now = Date.now();
    const elapsed = (now - this.lastRefill) / 1000;
    this.tokens = Math.min(this.capacity, this.tokens + elapsed * this.refillRate);
    this.lastRefill = now;
  }
}

// Usage
const bucket = new TokenBucket(60, 1); // 60 capacity, 1 token per second

async function takeScreenshot(url) {
  await bucket.acquire();
  // Encode the target URL so characters like & and ? don't break the query string
  return fetch(`https://screenshotrun.com/api/v1/screenshots/capture?url=${encodeURIComponent(url)}`, {
    headers: { Authorization: 'Bearer YOUR_API_KEY' },
  });
}
```

This works perfectly while you have one process. The moment you spin up multiple workers, each with its own bucket, the overall rate is no longer controlled: three workers each paced at 60 req/min add up to 180 req/min. Sharing one rate across machines needs shared state (more on that below). Within a single process, the next approach controls load from a different angle: a queue with a hard cap on concurrent requests.
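One caveat with `new TokenBucket(60, 1)`: the bucket starts full, so 60 requests can leave at once, and refill adds about 60 more over the following minute. In the worst case a 60-second window sees `capacity + refillRate × 60` requests, roughly double a strict 60 req/min limit. A small sketch of hypothetical helpers (not from any library) that split a per-minute limit between an initial burst and a steady refill rate:

```javascript
// Hypothetical helper: split a per-minute limit into a burst (bucket capacity)
// and a refill rate so that burst + refill within 60 seconds equals the limit.
// Capacity must be at least 1, otherwise acquire() could never get a whole token.
function bucketParamsForLimit(limitPerMinute, burst) {
  if (burst < 1 || burst >= limitPerMinute) {
    throw new RangeError('burst must be >= 1 and smaller than the limit');
  }
  const refillPerSecond = (limitPerMinute - burst) / 60;
  return { capacity: burst, refillPerSecond };
}

// Upper bound on requests a token bucket can admit within one window:
// everything already in the bucket plus everything refilled during the window.
function worstCaseInWindow(capacity, refillPerSecond, windowSeconds = 60) {
  return capacity + refillPerSecond * windowSeconds;
}

console.log(worstCaseInWindow(60, 1)); // 120
const { capacity, refillPerSecond } = bucketParamsForLimit(60, 10);
console.log(capacity, worstCaseInWindow(capacity, refillPerSecond));
```

For the free plan's 5 req/min, for example, `bucketParamsForLimit(5, 1)` gives a one-token bucket refilled at 4 tokens per minute, which you would then pass to `new TokenBucket(capacity, refillPerSecond)`.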

Queue with concurrency limit for batch processing

When you need to process a batch of URLs, it's better to think not in "tokens per second" but in "how many concurrent requests are allowed." p-limit is the most straightforward tool here:

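```javascript
import pLimit from 'p-limit';

const limit = pLimit(5); // up to 5 concurrent requests

async function takeScreenshot(url) {
  return fetch(`https://screenshotrun.com/api/v1/screenshots/capture?url=${encodeURIComponent(url)}`, {
    headers: { Authorization: 'Bearer YOUR_API_KEY' },
  });
}

const urls = [/* 1000 URLs */];

// Every call is queued; at most 5 run at any moment.
// Top-level await requires running this file as an ES module.
const results = await Promise.all(
  urls.map((url) => limit(() => takeScreenshot(url)))
);
```

p-limit guarantees that no more than N requests are active at any given moment, which adapts naturally to a rate-limited API: when responses slow down, throughput drops with them. The throughput itself follows from latency. If each request takes about 2 seconds on average, 5 concurrent requests give roughly 2.5 requests per second, or 150 per minute. Whether that pace is sustainable depends on your limit (150/min is above even a 100 req/min quota), so pick N from your limit and typical latency, or combine p-limit with the token bucket above.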
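Picking N is an application of Little's law: average requests in flight = throughput × average latency. A sketch with hypothetical helper names, assuming latency stays roughly stable (the 2-second figure is the example above, not a measurement):

```javascript
// Little's law: concurrency = throughput (req/s) * latency (s).
// Returns the largest concurrency cap whose expected throughput stays at or
// below the limit, but never less than 1 (a cap of 0 would process nothing).
function concurrencyForRateLimit(limitPerMinute, avgLatencySeconds) {
  const throughputPerSecond = limitPerMinute / 60;
  return Math.max(1, Math.floor(throughputPerSecond * avgLatencySeconds));
}

// Expected requests per minute for a given concurrency cap and latency
function expectedPerMinute(concurrency, avgLatencySeconds) {
  return (concurrency / avgLatencySeconds) * 60;
}

console.log(expectedPerMinute(5, 2)); // 150
console.log(concurrencyForRateLimit(100, 2)); // 3
console.log(concurrencyForRateLimit(15, 2)); // 1
```

With 2-second responses, a 100 req/min quota allows a cap of 3 (about 90 req/min). For a 15 req/min quota even a single in-flight request makes about 30 req/min, so concurrency alone cannot stay under the limit, and that is where pairing p-limit with the token bucket pays off.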

For a distributed setup with workers across multiple machines, you need a real queue. BullMQ with a Redis backend works well, but that's a bigger architectural decision, and for most projects with a single server, p-limit is enough. By the way, a smart way to reduce API load is to cache identical requests on the client side — I covered specific patterns for Redis and in-memory caches in my post on caching screenshots.
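The caching idea can start as a small in-memory map. A minimal sketch with a hypothetical `createCachedFetcher` helper, assuming identical URLs within a short TTL can share one screenshot. Because it stores the promise rather than the value, concurrent duplicates also collapse into a single API call. The wrapped fetcher should resolve to the image bytes, not a fetch Response, since a Response body can only be read once.

```javascript
// Hypothetical helper: identical keys within `ttlMs` share one result.
// Storing the in-flight promise means concurrent duplicates reuse it.
function createCachedFetcher(fetcher, ttlMs = 60000, now = () => Date.now()) {
  const cache = new Map(); // key -> { promise, expiresAt }

  return function cachedFetch(key) {
    const entry = cache.get(key);
    if (entry && entry.expiresAt > now()) {
      return entry.promise;
    }
    const promise = fetcher(key);
    // Don't cache failures: drop the entry (if it's still ours) so the next call retries
    promise.catch(() => {
      if (cache.get(key)?.promise === promise) cache.delete(key);
    });
    cache.set(key, { promise, expiresAt: now() + ttlMs });
    return promise;
  };
}
```

A fetcher such as `async (url) => (await limit(() => takeScreenshot(url))).arrayBuffer()` keeps the p-limit cap in place while the cache absorbs repeats.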

Retry-After header — the most underused strategy

This one gets overlooked more often than any other. When an API returns 429, the response usually includes a Retry-After header. That's a recommendation from the API on how long to wait before retrying. Most developers ignore it and jump straight into their own exponential backoff — that works, but it's suboptimal because it ignores the API's exact knowledge of current load.

The correct handling looks like this:

```javascript
async function fetchWithRetryAfter(url, options, maxAttempts = 5) {
  for (let attempt = 0; attempt < maxAttempts; attempt++) {
    const response = await fetch(url, options);

    if (response.status !== 429 && response.status !== 503) {
      return response;
    }

    // We won't read this body, so release the connection
    await response.body?.cancel();

    // No point sleeping after the final attempt
    if (attempt === maxAttempts - 1) break;

    const retryAfter = response.headers.get('retry-after');
    let waitMs = NaN;

    if (retryAfter) {
      // Header is either a number of seconds or an HTTP date
      const parsed = parseInt(retryAfter, 10);
      if (!isNaN(parsed)) {
        waitMs = parsed * 1000;
      } else {
        waitMs = Math.max(0, new Date(retryAfter).getTime() - Date.now());
      }
    }

    if (isNaN(waitMs)) {
      // Fall back to exponential backoff if the header is missing or unparseable
      waitMs = Math.min(30000, 1000 * Math.pow(2, attempt));
    }

    await new Promise((r) => setTimeout(r, waitMs));
  }

  throw new Error(`Failed after ${maxAttempts} attempts`);
}
```

I handle both the numeric format (Retry-After: 60) and the HTTP-date format (Retry-After: Mon, 04 May 2026 12:00:00 GMT), because the HTTP spec allows both and different APIs use different ones. If the header isn't there at all, or can't be parsed, fall back to exponential backoff, ideally the jittered version from the next section.
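The parsing step is easier to test when pulled into its own function. A sketch with a hypothetical `parseRetryAfter` name that is stricter than the `parseInt` approach: it accepts only whole seconds or a date, and returns `null` for a missing or malformed header instead of letting `NaN` reach `setTimeout`, which would treat it as a near-zero delay.

```javascript
// Parses a Retry-After value into a delay in milliseconds.
// Accepts delay-seconds ("120") or an HTTP-date ("Mon, 04 May 2026 12:00:00 GMT").
// Returns null when the value is missing or unparseable, so the caller can
// fall back to its own backoff.
function parseRetryAfter(value, nowMs = Date.now()) {
  if (value == null) return null;
  const trimmed = String(value).trim();
  if (/^\d+$/.test(trimmed)) {
    return Number(trimmed) * 1000;
  }
  const dateMs = Date.parse(trimmed);
  if (Number.isNaN(dateMs)) return null;
  return Math.max(0, dateMs - nowMs); // a date in the past means "retry now"
}

console.log(parseRetryAfter('60')); // 60000
console.log(parseRetryAfter(null)); // null
```

Inside a retry loop, this becomes `parseRetryAfter(response.headers.get('retry-after')) ?? fallbackMs`.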

Exponential backoff and why jitter matters

When Retry-After isn't returned, you need your own backoff. The standard formula is delay = min(cap, base * 2^attempt). But there's a critical detail that often gets missed: jitter.

Without jitter, every retrying client waits exactly the same amount of time and slams back into the API simultaneously. That creates a thundering herd — a synchronized retry wave that overloads the API again right after it recovers. The fix is to add randomness:

```javascript
// Full jitter: a random delay between 0 and the capped exponential value
function exponentialBackoffWithJitter(attempt, baseMs = 1000, capMs = 30000) {
  const exponential = Math.min(capMs, baseMs * Math.pow(2, attempt));
  return Math.random() * exponential; // full jitter, the best variant for most cases
}

async function fetchWithBackoff(url, options, maxAttempts = 5) {
  for (let attempt = 0; attempt < maxAttempts; attempt++) {
    try {
      const response = await fetch(url, options);
      if (response.ok) return response;
      if (response.status < 500 && response.status !== 429) {
        return response; // 4xx (except 429) shouldn't be retried
      }
      await response.body?.cancel(); // free the connection before retrying
    } catch (err) {
      // Network error: retry unless this was the last attempt
      if (attempt === maxAttempts - 1) throw err;
    }

    if (attempt === maxAttempts - 1) break; // don't sleep after the final attempt

    const waitMs = exponentialBackoffWithJitter(attempt);
    await new Promise((r) => setTimeout(r, waitMs));
  }

  throw new Error(`Failed after ${maxAttempts} attempts`);
}
```

I use "full jitter": random * exponential, not exponential ± random. In the AWS Architecture Blog's experiments, every jittered variant cut client work and server load sharply compared to plain exponential backoff, and full jitter needed the fewest calls of the variants tested while staying the simplest to implement.
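To see the thundering herd in numbers, here is a small simulation sketch: 1,000 clients fail at the same instant and compute their delay for attempt 3 (an 8-second window with the defaults). It counts how many clients land in the busiest 100 ms slot; the slot width is an arbitrary choice for counting collisions, and `fullJitterDelay` uses the same formula as the function above.

```javascript
// Full jitter, same formula as exponentialBackoffWithJitter above
function fullJitterDelay(attempt, baseMs = 1000, capMs = 30000) {
  return Math.random() * Math.min(capMs, baseMs * 2 ** attempt);
}

// Largest number of delays that fall into the same time slot
function busiestSlot(delays, slotMs = 100) {
  const counts = new Map();
  for (const d of delays) {
    const slot = Math.floor(d / slotMs);
    counts.set(slot, (counts.get(slot) ?? 0) + 1);
  }
  return Math.max(...counts.values());
}

const clients = 1000;
const noJitter = Array.from({ length: clients }, () => Math.min(30000, 1000 * 2 ** 3));
const withJitter = Array.from({ length: clients }, () => fullJitterDelay(3));
console.log('busiest slot without jitter:', busiestSlot(noJitter)); // 1000
console.log('busiest slot with full jitter:', busiestSlot(withJitter));
```

Without jitter every client hits the API in the same slot; with full jitter the retries spread across roughly 80 slots, so each slot sees a small fraction of the load.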

One more note on the status code check: retrying 5xx and 429 makes sense, retrying other 4xx doesn't. If the API returns 401 (bad key) or 400 (bad params), a retry gives the exact same result and burns through your attempts. Which errors are actually safe to retry is a topic of its own, and it often comes up at the boundary with lifecycle issues in headless browsers. My separate post on Protocol error captureScreenshot covers it in the context of race conditions during retries.

Circuit breaker for distributed setups

This approach is for when an API is down not for a minute, but for five or ten. Without a circuit breaker, your client keeps hammering the API every 30 seconds (or whatever your backoff cap is), creating constant load on an already-broken service.

A circuit breaker has three states. Closed — normal operation, requests go through. Open — after a series of failures, the breaker "opens" and blocks requests, not even sending them to the API. Half-open — after a timeout, the breaker tries one request, and if it succeeds, returns to closed:

```typescript
class CircuitBreaker {
  private failures = 0;
  private state: 'closed' | 'open' | 'half-open' = 'closed';
  private nextAttemptAt = 0;

  constructor(
    private threshold = 5,
    private resetTimeoutMs = 60000
  ) {}

  async execute<T>(fn: () => Promise<T>): Promise<T> {
    if (this.state === 'open') {
      if (Date.now() < this.nextAttemptAt) {
        throw new Error('Circuit breaker is open');
      }
      // Cool-down is over: let exactly this call through as the trial request
      this.state = 'half-open';
    } else if (this.state === 'half-open') {
      // A trial request is already in flight; reject others until it settles
      throw new Error('Circuit breaker is half-open');
    }

    try {
      const result = await fn();
      this.onSuccess();
      return result;
    } catch (err) {
      this.onFailure();
      throw err;
    }
  }

  private onSuccess() {
    this.failures = 0;
    this.state = 'closed';
  }

  private onFailure() {
    this.failures++;
    // A failed trial re-opens immediately, since failures is still >= threshold
    if (this.failures >= this.threshold) {
      this.state = 'open';
      this.nextAttemptAt = Date.now() + this.resetTimeoutMs;
    }
  }
}

// Usage
const breaker = new CircuitBreaker(5, 60000);

async function takeScreenshot(url: string) {
  return breaker.execute(async () => {
    const response = await fetch(
      `https://screenshotrun.com/api/v1/screenshots/capture?url=${encodeURIComponent(url)}`,
      { headers: { Authorization: 'Bearer YOUR_API_KEY' } }
    );
    // fetch only rejects on network errors, so turn 5xx into a failure the breaker can count
    if (response.status >= 500) {
      throw new Error(`Screenshot API returned ${response.status}`);
    }
    return response;
  });
}
```

This is a minimal implementation. In production code, it makes sense to use a ready-made library like opossum for Node.js: it gives you metrics, a rolling window instead of a simple failure counter, and integration with monitoring systems. But the core logic is exactly this.
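The difference between a failure counter and a window is that a window trips on the recent failure rate, so a few errors spread over an hour don't open the breaker while a burst of them does. A minimal sketch of that bookkeeping with a hypothetical `FailureWindow` class and illustrative thresholds (this is not opossum's actual implementation):

```javascript
// Tracks outcomes inside a time window and reports whether the failure rate
// justifies opening the breaker. Timestamps are passed in explicitly (use
// Date.now() in real code) and must be recorded in non-decreasing order.
class FailureWindow {
  constructor({ windowMs = 10000, minRequests = 10, failureRatio = 0.5 } = {}) {
    this.windowMs = windowMs;
    this.minRequests = minRequests; // don't trip on a tiny sample
    this.failureRatio = failureRatio;
    this.events = []; // { at, ok }, oldest first
  }

  record(ok, at) {
    this.events.push({ at, ok });
    this.prune(at);
  }

  // Drop events that have slid out of the window
  prune(now) {
    while (this.events.length && this.events[0].at <= now - this.windowMs) {
      this.events.shift();
    }
  }

  shouldOpen(now) {
    this.prune(now);
    if (this.events.length < this.minRequests) return false;
    const failures = this.events.filter((e) => !e.ok).length;
    return failures / this.events.length >= this.failureRatio;
  }
}
```

In the breaker above, `onSuccess` and `onFailure` would call `record(true|false, Date.now())`, and `onFailure` would open the circuit when `shouldOpen(Date.now())` returns true instead of comparing a counter with the threshold.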

What not to do

A few common mistakes that break rate limiting at the start of a project.

Retrying any 4xx error. 400, 401, and 403 are problems with your request or auth. A retry won't fix anything, it just burns attempts. The main exception is 429, which does need to be retried.

Retrying without backoff. Tight loop scenario: first request fails → another one immediately → fails again → another one. That creates a DDoS on an already-overloaded API.

Ignoring the Retry-After header. The API knows its load better than you do, and its recommendation is usually more accurate than your own backoff cap.

Retrying forever without a maxAttempts cap. At some point you have to admit the request isn't going through and move on to failure handling. Otherwise clogged-up workers eat resources.

Not distinguishing transient and permanent errors. 429 and 503 are transient (a retry might help), 401 and 400 are permanent (a retry won't help). This is the first thing your retry logic should sort out.
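The checklist above boils down to a small classification step that runs before any retry decision. A sketch with hypothetical names (`classifyOutcome`, `shouldRetry`), using the same 0-based attempt counter and maxAttempts cap as the retry loops earlier:

```javascript
// Sorts a request outcome into the categories retry logic needs.
// 429 and 5xx are transient, other 4xx are permanent. A network error
// (fetch rejected, so there is no status) counts as transient.
function classifyOutcome({ status, networkError = false }) {
  if (networkError) return 'transient';
  if (status === 429 || (status >= 500 && status <= 599)) return 'transient';
  if (status >= 400 && status <= 499) return 'permanent';
  return 'ok';
}

// Only transient outcomes may consume another attempt, and never past the cap
function shouldRetry(outcome, attempt, maxAttempts) {
  return classifyOutcome(outcome) === 'transient' && attempt < maxAttempts - 1;
}

console.log(classifyOutcome({ status: 503 })); // transient
console.log(classifyOutcome({ status: 401 })); // permanent
console.log(shouldRetry({ status: 429 }, 4, 5)); // false
```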

If you're a screenshotrun API consumer

Like any rate-limited API, screenshotrun has its own limits — they're tied to your plan. The free plan gets you 5 requests per minute and 200 screenshots per month, Starter — 15/min and 3,000/month, Pro — 40/min and 15,000/month, Business — 100/min and 50,000/month.

The approaches above apply directly: token bucket for pacing, p-limit for batch processing, Retry-After for proper 429 handling. On the API side, I return standard codes (429 when you exceed your rate limit) with a properly populated Retry-After header, plus extra X-RateLimit-Limit, X-RateLimit-Remaining, and X-RateLimit-Reset for anyone who wants to see the full quota state. The standard implementation from this article works without modifications.
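The X-RateLimit-* headers also allow a proactive twist: pace on what the server says is left rather than on a fixed rate. A sketch with a hypothetical `delayFromRateLimitHeaders` helper, assuming X-RateLimit-Reset is a Unix timestamp in seconds. That is a common convention but not universal (some APIs send seconds until reset), so check the API docs before relying on it.

```javascript
// Hypothetical helper: delay before the next request, derived from the
// X-RateLimit-* headers. ASSUMPTION: X-RateLimit-Reset is a Unix timestamp
// in seconds. `headers` only needs get(name), e.g. a fetch Response's headers.
function delayFromRateLimitHeaders(headers, nowMs = Date.now()) {
  const remainingRaw = headers.get('x-ratelimit-remaining');
  const resetRaw = headers.get('x-ratelimit-reset');
  // get() returns null (Headers) or undefined (Map) for a missing header
  if (remainingRaw == null || resetRaw == null) return 0;

  const remaining = Number(remainingRaw);
  const resetSec = Number(resetRaw);
  if (!Number.isFinite(remaining) || !Number.isFinite(resetSec)) return 0;

  const msUntilReset = Math.max(0, resetSec * 1000 - nowMs);
  if (remaining <= 0) return msUntilReset; // quota exhausted: wait for the reset
  // Spread the remaining quota evenly over what is left of the window
  return Math.floor(msUntilReset / remaining);
}
```

After each response, waiting `delayFromRateLimitHeaders(response.headers)` milliseconds before the next request keeps a single worker close to its quota without tripping a 429.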

If you're curious about when it makes sense to run your own Puppeteer server with custom rate-limiting infrastructure versus using a hosted API, I have a post on build vs buy covering that. And for the very first tests of your rate limit logic, the basic curl call template is in my separate post on curl and screenshots.

By the way, if you're taking screenshots of sites in Docker and running into rate limits from the target sites themselves (Cloudflare blocking, for example), that's a different story altogether — not about API rate limiting. I covered it in my separate post on ERR_CONNECTION_REFUSED in Docker — it has the causes and fixes for blocks on the target site side.

Rate limiting isn't "wire up backoff and forget." It's an architectural decision with two sides: controlling outbound traffic and handling refusals. If both are designed in from the start, a production client stays stable even under significant API load spikes. Most guides only cover the reactive half, which is exactly why so many production clients constantly hit limits in the first place.

Vitalii Holben