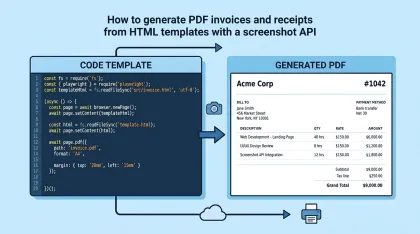

Wait for page to fully load before screenshot: 4 strategies

I took a screenshot of a public dashboard and got back an image with empty tables. Here are four strategies to wait for a page to fully load, with a real Puppeteer walkthrough.

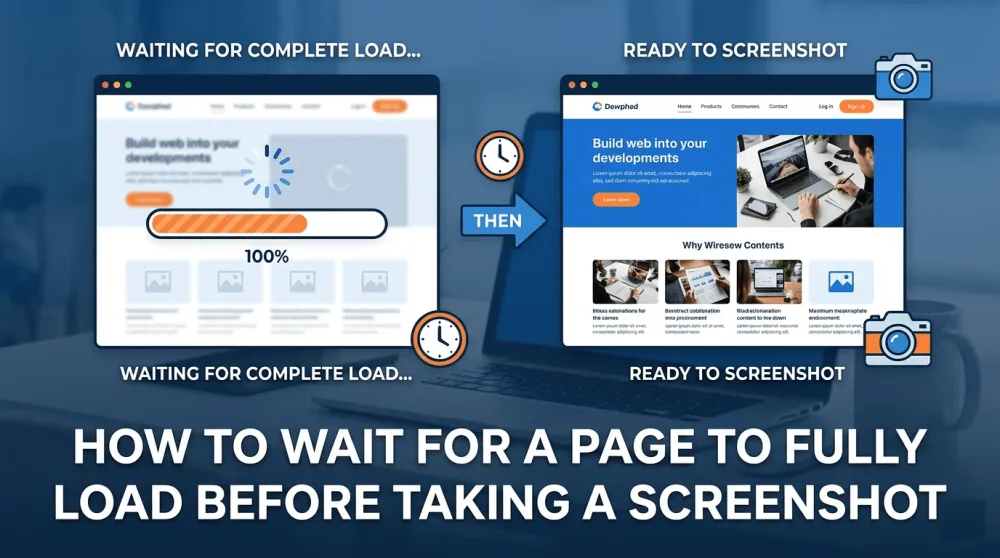

A while ago I was taking a screenshot of a public analytics dashboard — a standard React SPA with a chart and a few tables that pull their data over fetch. My code was as simple as it gets: open the page with Puppeteer, wait for the load event, save a PNG. I ran it, and got back an image where the header was there, the navigation was there, the chart was there, but the tables at the bottom were completely empty. It looked like someone had cropped the screenshot halfway through the render.

I switched the wait strategy to network idle and the tables showed up. One line changed, problem solved. But that was the kind of lucky outcome that hides the real complexity underneath: "loaded" isn't a binary state you can describe with one event. There are at least four distinct states, and they happen at different times. On a different site that same one-line change wouldn't have been enough, and I'd have had to go deeper — into selectors, into content checks, into edge cases with canvas elements and infinite feeds.

In this article I'll walk through all four "ready" states, show four wait strategies, run a real walkthrough on Plausible Analytics' public dashboard, cover the pitfalls I hit when picking selectors (including one I almost stepped into), and give you a reference table for Puppeteer, Playwright, and Selenium. The last section covers edge cases that break even a correctly-tuned wait.

What "page loaded" actually means

When developers say "wait for the page to load," they usually mean one of four very different states. Here they are in the order they happen:

| State | What just happened | Event / signal | When it's enough |
|---|---|---|---|
| DOM ready | HTML parsed, scripts started running | DOMContentLoaded | static pages, landing pages with no JS logic |
| Load | All images, fonts, and initial iframes finished loading | load | simple sites without async content |
| Network idle | No network requests for N milliseconds | networkidle0 / networkidle2 (Puppeteer), networkidle (Playwright) | SPAs, dashboards, async charts |
| Visually stable | The specific element you care about rendered and settled | custom selector or delay | lazy loading, animations, canvas |

The difference between these matters more than it looks. In practice, it's the difference between a screenshot of an empty page skeleton and a screenshot of a real dashboard with data in it. On modern frontends the load event often fires before the page has anything useful to show. Right after load, the page typically kicks off dozens of requests for data, lazy-loaded components, and analytics, and only then starts rendering real content.
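You can measure this gap on your own target page. Read the browser's Navigation Timing and Resource Timing entries once the page has settled, then compare when load fired with when the last request finished. The sketch below uses a hypothetical helper: summarizeLoadTimeline is not a Puppeteer API. It assumes the entries were copied out of the page with page.evaluate, as the usage comment shows.

```javascript
// Hypothetical helper: compare when the "ready" events fired with when
// network activity actually stopped. All times are in milliseconds,
// relative to the start of navigation (performance.timeOrigin).
// Note: a resource entry is only recorded once its request completes,
// so requests still in flight are not visible here.
function summarizeLoadTimeline(nav, resources) {
  // Latest moment any subresource finished downloading.
  const lastResourceEnd = resources.reduce(
    (latest, r) => Math.max(latest, r.responseEnd),
    0
  );
  // Requests that only started after the load event had already finished.
  const startedAfterLoad = resources.filter(r => r.startTime > nav.loadEventEnd);
  return {
    domReady: nav.domContentLoadedEventEnd,
    load: nav.loadEventEnd,
    lastResourceEnd,
    requestsAfterLoad: startedAfterLoad.length,
  };
}

// Usage with Puppeteer (inside an async function, after page.goto):
//   const { nav, resources } = await page.evaluate(() => ({
//     nav: performance.getEntriesByType('navigation')[0].toJSON(),
//     resources: performance.getEntriesByType('resource').map(r => r.toJSON()),
//   }));
//   console.log(summarizeLoadTimeline(nav, resources));
```

On a typical SPA, lastResourceEnd lands well after load, and requestsAfterLoad is the number of fetches your screenshot would have missed.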

The takeaway I want you to internalize: picking a wait strategy isn't a tool-configuration question. It's a question of understanding when the page you're screenshotting is actually ready for what you want from it. And that depends on how the page is built.

Four wait strategies

I'll go through each strategy the same way: what it does, when to use it, when to skip it, and a short Puppeteer example. Playwright and Selenium work on the same principles with different syntax — there's a reference table further down.

Strategy 1. Wait for the load event

This is the default in almost every headless tool. The page is considered ready once the browser finishes parsing HTML, loads images, fonts, and the iframes that were in the original markup, and fires the load event.

```javascript
await page.goto('https://example.com', { waitUntil: 'load' });
await page.screenshot({ path: 'shot.png' });
```

When to use it: a static blog, a simple landing page, documentation without dynamic components. If the site doesn't fire off requests after the initial render, this is enough.

When to skip it: any page that pulls data via fetch or XHR after the initial render. Which, honestly, is most modern sites. Dashboards, social feeds, e-commerce, admin panels — they all show a skeleton first, then hydrate, then fetch data. The load event fires somewhere around step one of three, and that's why screenshots end up half-empty.

Strategy 2. Wait for network to go quiet

A more reliable approach is to wait until the page stops making new requests. Puppeteer exposes two values on waitUntil: networkidle0 means "zero active requests for 500ms," and networkidle2 means "no more than two active requests for 500ms."

```javascript
await page.goto('https://example.com', { waitUntil: 'networkidle2' });
await page.screenshot({ path: 'shot.png' });
```

The difference between the two looks small and isn't. If the site has a chat widget, a live feed, analytics, or any WebSocket that sends periodic pings, networkidle0 may never fire, and page.goto will time out before you ever reach the screenshot. networkidle2 handles those cases because it tolerates up to two "background" requests running in parallel.
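Here is a simplified model of the rule described above that makes the 0-versus-2 difference concrete. It counts in-flight requests and calls the page idle once that count has stayed at or below a threshold for 500 ms. This is an illustration, not Puppeteer's implementation, since Puppeteer relies on Chromium's own lifecycle signals. NetworkIdleTracker and attachIdleTracker are hypothetical names. The request, requestfinished, and requestfailed page events are real Puppeteer events.

```javascript
// Simplified model of networkidle0 / networkidle2 (not Puppeteer's own code):
// the page counts as idle once the number of in-flight requests has stayed
// at or below maxInflight for at least idleMs.
class NetworkIdleTracker {
  constructor({ maxInflight = 0, idleMs = 500, now = () => Date.now() } = {}) {
    this.maxInflight = maxInflight; // 0 ~ networkidle0, 2 ~ networkidle2
    this.idleMs = idleMs;
    this.now = now; // injectable clock, handy for testing
    this.inflight = 0;
    this.quietSince = now(); // null while above the threshold
  }

  requestStarted() {
    this.inflight += 1;
    if (this.inflight > this.maxInflight) this.quietSince = null;
  }

  requestEnded() {
    this.inflight = Math.max(0, this.inflight - 1);
    // Start the quiet window the moment we drop back to the threshold.
    if (this.inflight <= this.maxInflight && this.quietSince === null) {
      this.quietSince = this.now();
    }
  }

  isIdle() {
    return this.quietSince !== null && this.now() - this.quietSince >= this.idleMs;
  }
}

// Hypothetical wiring to a Puppeteer page: every request that starts ends
// either as "finished" or "failed", so both decrement the counter.
function attachIdleTracker(page, tracker) {
  page.on('request', () => tracker.requestStarted());
  page.on('requestfinished', () => tracker.requestEnded());
  page.on('requestfailed', () => tracker.requestEnded());
  return tracker;
}
```

In this model, a chat widget that holds one request open forever keeps a maxInflight: 0 tracker from ever reporting idle. A maxInflight: 2 tracker reports idle 500 ms after everything else quiets down. That is the same trade-off as networkidle0 versus networkidle2.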

When to use it: SPAs with async data loading, dashboards with fetch-driven charts, e-commerce pages with lazy-loaded recommendations.

When to skip it: sites with constant background traffic — social feeds that poll for updates every couple of seconds via long-polling, for example. In those cases you need strategy three.

Strategy 3. Wait for a specific selector

The most precise and predictable strategy is to tell the browser: "I know the page is ready when this element appears." It's a contract between your code and the page that doesn't depend on network activity or arbitrary timeouts.

```javascript
await page.goto('https://example.com');
await page.waitForSelector('.chart-rendered', { timeout: 10000 });
await page.screenshot({ path: 'shot.png' });
```

I'm waiting for a class called .chart-rendered here, which (hypothetically) gets attached to the element once the charting library finishes drawing. In practice, the selector needs to fit the actual site: it might be a "ready" class, an attribute like data-loaded="true", or a specific text node like .total-visitors that holds a real number instead of a dash. There's a separate section below on how to pick selectors on an unfamiliar site and what pitfalls to watch for, specifically canvas-based charts and div layouts disguised as tables.

When to use it: when you control the target page (your own app) or know its structure well enough to reliably identify a "ready" selector.

When to skip it: when you're screenshotting arbitrary URLs and have no idea what's under the hood. For those cases, there's no universal selector, and you have to combine network idle with a fixed delay.

Strategy 4. Fixed delay

The last resort is to just wait N milliseconds. It's a hack, I'll say that plainly, but sometimes it's the only option that works.

```javascript
await page.goto('https://example.com', { waitUntil: 'networkidle2' });
// Sleep for a fixed 2 seconds before capturing
await new Promise(resolve => setTimeout(resolve, 2000));
await page.screenshot({ path: 'shot.png' });
```

Two problems with fixed delays are worth knowing about. First, they're slow: even if the page is ready in 300ms, the screenshot will wait the full 2000. Over a thousand screenshots, that's close to half an hour of dead time. Second, they're fragile: on a slow CI runner or a bad connection, 2 seconds might not be enough, and the result breaks again.
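The cost of the first problem is easy to estimate. Each screenshot wastes the gap between the fixed delay and the moment the page was actually ready. A quick sketch of that arithmetic follows; deadTimeSeconds is just an illustrative helper.

```javascript
// Illustrative helper: total time spent waiting after the page was already ready.
function deadTimeSeconds(screenshots, delayMs, readyAfterMs) {
  const wastedPerShotMs = Math.max(0, delayMs - readyAfterMs);
  return (screenshots * wastedPerShotMs) / 1000;
}

// 1,000 screenshots, a 2000 ms delay, pages that were ready after 300 ms:
const wasted = deadTimeSeconds(1000, 2000, 300);
console.log(`${wasted} s, about ${Math.round(wasted / 60)} minutes`);
```

At 100,000 screenshots a day, the same 1.7-second gap adds up to roughly 47 hours of browser time.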

When to use it: canvas animations with no clear final state, WebGL scenes, complex CSS animations, iframes with third-party content where you can't modify the structure.

When to skip it: anywhere you can get away with a selector or network idle instead. A fixed delay is an admission that you couldn't find a precise readiness signal, not a first-choice strategy.

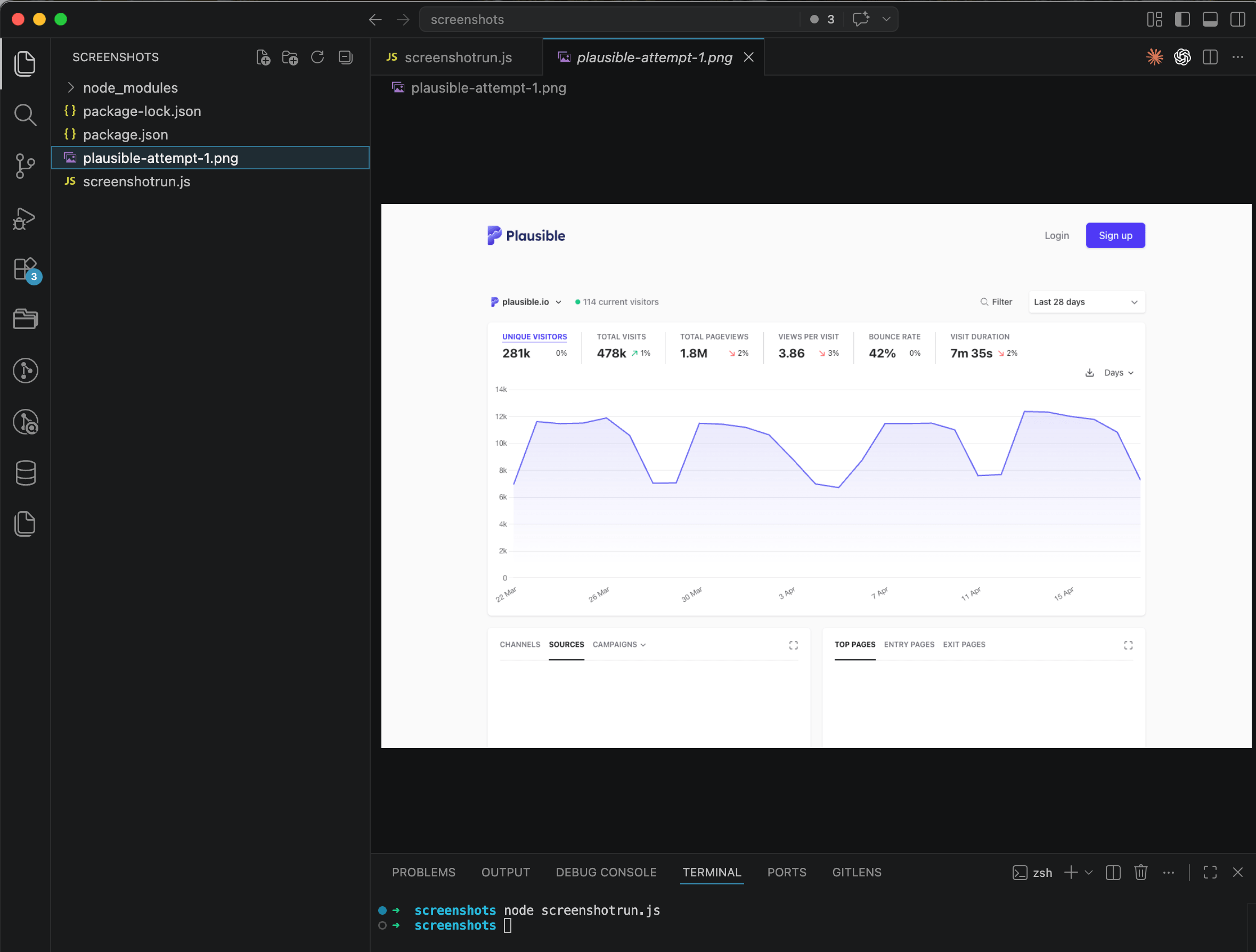

In practice: screenshotting the Plausible Analytics dashboard

Now let me show how these strategies play out on a real site. Plausible Analytics made their own stats public — the page at plausible.io/plausible.io is a live React dashboard with a visitors chart and two data tables (Sources and Top Pages). Typical SPA with async data loading, exactly the case where the naive approach falls apart.

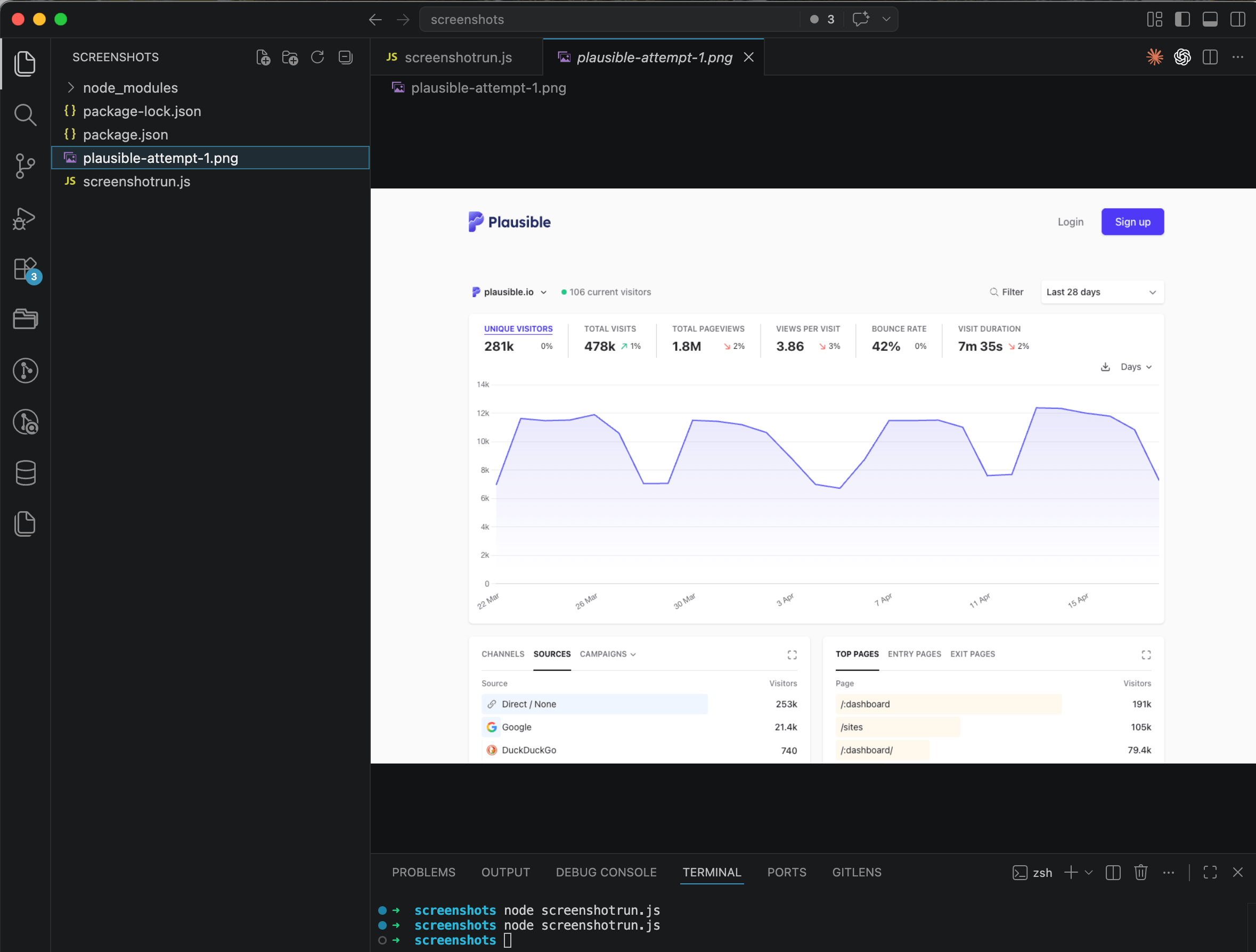

Attempt one: naive load

I started with the simplest possible code, which is what most people write on their first try:

```javascript
const puppeteer = require('puppeteer');

(async () => {
  const browser = await puppeteer.launch();
  const page = await browser.newPage();
  await page.setViewport({ width: 1440, height: 900 });

  await page.goto('https://plausible.io/plausible.io', {
    waitUntil: 'load'
  });

  await page.screenshot({ path: 'plausible-attempt-1.png', fullPage: true });
  await browser.close();
})();
```

The header, the metrics at the top, the visitors chart — all there. But the Sources and Top Pages blocks at the bottom only show their column headers, no rows. The browser thinks the page is loaded, but the data for those tables is still on its way.

Attempt two: networkidle2

I changed waitUntil to networkidle2 so Puppeteer would wait for the fetch calls to finish:

```javascript
await page.goto('https://plausible.io/plausible.io', {
  waitUntil: 'networkidle2',
  timeout: 30000
});

await page.screenshot({ path: 'plausible-attempt-2.png', fullPage: true });
```

This time everything's there — the chart, the metrics, both tables with real data, even the map and browsers blocks further down. This is a screenshot I can actually ship.

For Plausible, that's enough. Swapping waitUntil: 'load' for waitUntil: 'networkidle2' was the whole fix, and stacking more waits on top would just be overengineering.

But here's the thing: when I was staring at those empty tables in the first attempt, my instinct wasn't "let me try network idle." It was "let me wait for the chart with a selector — the chart is the main thing on this page." And that instinct almost sent me half an hour down the wrong road. On a different site, where network idle won't solve the problem in one shot, that wrong road becomes a real issue. So it's worth unpacking.

How to pick a selector when network idle isn't enough

Network idle worked on Plausible, but it won't always. In all of the following cases you can't rely on the network going quiet, and you have to find a concrete readiness signal in the DOM: sites with constant background traffic (chat widgets, long-polling, live feeds), pages with heavy client-side hydration, and canvas animations that settle into a final state. That's where two pitfalls show up. I nearly walked into both of them on Plausible itself, even though the selector turned out to be unnecessary.

Pitfall one: canvas, not SVG

Seeing the empty tables in the first attempt, my first thought was "I'll wait for the chart as a final marker." I opened DevTools, found a container with a .main-graph class, assumed there was an SVG path inside it for the chart line (it's a smooth curve, it has to be a <path>, right?), and wrote:

```javascript
await page.waitForSelector('.main-graph svg path', { timeout: 10000 });
```

I stopped myself before running it and went to double-check the actual markup first. Here's what I found:

```html
<canvas id="main-graph-canvas" class="select-none cursor-pointer"
        width="2144" height="736"></canvas>
```

No SVG anywhere. Plausible's chart is drawn inside a <canvas>: one big bitmap, with no <path>, <rect>, or any other DOM elements inside, just pixels. If I'd actually run that code, I would've gotten a TimeoutError after ten seconds, because the selector was hunting for something that doesn't exist in the markup.

The general lesson: a canvas is a black box to the DOM. You can write a selector for the canvas element itself (canvas#main-graph-canvas), but nothing inside it is reachable with CSS selectors. If you need to wait for a canvas chart to be ready, you have to anchor on neighboring elements: a heading with a number, a legend, a spinner (vanished means ready), or a sibling table that gets populated after the chart.

How to check in five seconds which one you're dealing with: open DevTools, find the chart, look at the tag. If it's an <svg> with nested elements, great — write a selector on the part you care about. If it's a <canvas> with no children, forget about targeting anything inside it.
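If you check many pages, you can script the same five-second inspection. The helper below is hypothetical and meant to run through page.evaluate. The .main-graph selector in the usage comment is the container from the Plausible markup above. The function uses only standard DOM calls, so Puppeteer can serialize it into the page.

```javascript
// Hypothetical page-side helper: report what a chart container really holds.
// It only touches browser globals, so it can be passed to page.evaluate.
function inspectChart(containerSelector) {
  const container = document.querySelector(containerSelector);
  if (!container) return { kind: 'missing' };

  // The container might itself be the canvas.
  const canvas = container.tagName === 'CANVAS'
    ? container
    : container.querySelector('canvas');
  const svg = container.querySelector('svg');

  if (svg) {
    // SVG charts expose their shapes as DOM nodes you can target.
    return { kind: 'svg', shapes: svg.querySelectorAll('path, rect, circle').length };
  }
  if (canvas) {
    // A canvas is a bitmap: nothing inside it is reachable with selectors.
    return { kind: 'canvas', id: canvas.id || null };
  }
  return { kind: 'other' };
}

// Usage with Puppeteer (inside an async function):
//   console.log(await page.evaluate(inspectChart, '.main-graph'));
```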

Pitfall two: div layouts instead of tables

Say you got past the chart and decided to wait for the Sources table to fill up (it fills last, which makes it a good readiness marker). First instinct: await page.waitForSelector('#sources tbody tr'). It's a table; that's how tables work.

Opening DevTools shows something different:

```html
<div data-testid="report-sources" class="relative min-h-[430px] ...">
  <div class="w-full flex justify-between border-b ...">...</div>
  <div>
    <div class="w-full" style="min-height: 356px;">
      <div class="group/report" style="min-height: 320px;">
        <!-- the source rows live here -->
      </div>
    </div>
  </div>
</div>
```

No <table>, no <tbody>, no <tr>. Plausible builds its "table" out of divs styled with Tailwind utility classes. Visually it looks like a table; structurally it's nested flex containers. A selector like #sources tbody tr would've timed out the same way the SVG one would have.

The general lesson: don't confuse the visual metaphor with the markup. What looks like a table might be anything under the hood. On modern SPAs built with Tailwind or styled-components, semantic tags often get replaced with <div> plus a stack of classes, and writing selectors based on assumptions about structure is a reliable way to earn a timeout. Always open the Elements panel and look at the actual tag.

How to actually wait for content

Let's say network idle didn't cover you and you need to wait for the block's content specifically. The data-testid="report-sources" attribute is a great hook here. Plausible's engineers most likely added it for their own end-to-end tests, which makes it more likely to stay stable across releases than a styling class. But there's a catch: the container element with that attribute shows up in the DOM before the data loads, with an empty skeleton inside. A plain waitForSelector('[data-testid="report-sources"]') would fire too early.

When you need to wait for content inside an element, not just the element itself, Puppeteer has waitForFunction:

```javascript
await page.waitForFunction(
  () => {
    const container = document.querySelector('[data-testid="report-sources"]');
    return container && container.textContent.includes('Direct');
  },
  { timeout: 10000 }
);
```

The logic: Puppeteer keeps re-running the function inside the page context (on every animation frame by default) until it returns a truthy value. I find the Sources container and check whether its text now includes the word "Direct", the first traffic source on Plausible's dashboard. Once the data renders, the condition flips to true and Puppeteer moves on.

This is a more powerful tool than waitForSelector, and it's worth having in your toolkit for cases like:

- The container exists in the DOM immediately, but its contents are a skeleton until data arrives.
- The readiness signal isn't "element exists" but "value matches": a real number instead of a dash, a loaded class appearing, an attribute flipping.
- You need multiple conditions at once ("chart rendered AND table populated").
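For the multiple-conditions case, you can pass the conditions in as data instead of hard-coding them. Puppeteer's waitForFunction forwards any arguments after the options object to the page function. In this sketch, containersReady is a hypothetical helper. .total-visitors is the hypothetical selector from the Strategy 3 example; only the report-sources check comes from Plausible's real markup.

```javascript
// Hypothetical page-side predicate for page.waitForFunction. Every check must
// pass: the element exists, and its text contains `includes` and/or matches
// `pattern` (a regex source string, since RegExp objects don't serialize).
function containersReady(checks) {
  return checks.every(({ selector, includes, pattern }) => {
    const el = document.querySelector(selector);
    if (!el) return false;
    const text = el.textContent.trim();
    if (includes !== undefined && !text.includes(includes)) return false;
    if (pattern !== undefined && !new RegExp(pattern).test(text)) return false;
    return true;
  });
}

// Usage with Puppeteer (inside an async function):
//   await page.waitForFunction(containersReady, { timeout: 10000 }, [
//     { selector: '[data-testid="report-sources"]', includes: 'Direct' },
//     { selector: '.total-visitors', pattern: '\\d' }, // a digit, not a dash
//   ]);
```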

On Plausible, as I said, networkidle2 is enough without any of this. But waitForFunction is the tool you reach for on sites where you don't get that lucky.

Parameter reference across tools

Here's the same behavior across the three popular tools, in one place you can bookmark.

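| Tool | Load event | Network idle | Selector | Delay |
|---|---|---|---|---|
| Puppeteer | waitUntil: 'load' | waitUntil: 'networkidle0' / 'networkidle2' | page.waitForSelector() | new Promise(r => setTimeout(r, ms)) |
| Playwright | waitUntil: 'load' | waitUntil: 'networkidle' | page.waitForSelector() | page.waitForTimeout(ms) |
| Selenium | fires by default | not available out of the box | WebDriverWait + expected conditions | explicit sleep (e.g. time.sleep()) |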

One thing worth calling out: Selenium doesn't have network idle at the API level. Historically this is because WebDriver was designed for functional testing, not screenshots. If you're on Selenium and need to wait for network quiet, you're stuck polling performance.getEntriesByType('resource') through execute_script and checking whether the list has stopped growing. That's doable, but much less convenient than the one-liner in Puppeteer or Playwright. It's one reason many modern screenshot and visual-testing tools talk to browsers over devtools protocols, such as the Chrome DevTools Protocol, instead.
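Here is a sketch of that polling approach using the JavaScript selenium-webdriver bindings. It assumes driver is an already-created WebDriver instance. waitForResourcesToSettle and stepSettle are hypothetical helpers, and the default timings are arbitrary. There are two caveats. First, a resource timing entry only appears once a request completes, so a request that is still in flight is invisible. Second, browsers stop recording entries once the resource timing buffer is full (typically 250 entries unless raised with performance.setResourceTimingBufferSize), which can make a busy page look settled.

```javascript
// Hypothetical helpers (not part of selenium-webdriver): approximate "network
// idle" by polling the number of Resource Timing entries until it stops changing.

// Pure step: has the count stayed the same for at least quietMs?
function stepSettle(state, count, now, quietMs) {
  if (state === null || count !== state.count) {
    // New or changed count: restart the quiet window.
    return { count, stableSince: now, settled: false };
  }
  return { ...state, settled: now - state.stableSince >= quietMs };
}

// Polling loop; `driver` is an existing selenium-webdriver WebDriver instance.
async function waitForResourcesToSettle(
  driver,
  { quietMs = 500, intervalMs = 100, timeoutMs = 15000 } = {}
) {
  const start = Date.now();
  let state = null;
  while (Date.now() - start < timeoutMs) {
    const count = await driver.executeScript(
      "return performance.getEntriesByType('resource').length;"
    );
    state = stepSettle(state, count, Date.now(), quietMs);
    if (state.settled) return count;
    await new Promise(resolve => setTimeout(resolve, intervalMs));
  }
  throw new Error(`resource count did not settle within ${timeoutMs} ms`);
}

// Usage (inside an async function):
//   await driver.get('https://plausible.io/plausible.io');
//   await waitForResourcesToSettle(driver, { quietMs: 1000 });
//   const png = await driver.takeScreenshot(); // base64-encoded PNG
```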

Edge cases that break even a correct wait

There are a few cases where the four strategies above aren't enough, and you have to work around them.

WebGL and animated canvas

A canvas chart that settles into a final still state is the easy case: wait for a neighboring table or legend and you're fine. WebGL and continuously-animated canvases are harder — there's no static markup inside at all, the scene lives on the GPU, and it never really "finishes" rendering in the traditional sense. If you need a guaranteed screenshot of a WebGL scene in a specific state, your options are: a fixed delay after the first frame, a requestAnimationFrame hook via page.evaluate if you control the page, or reaching directly into canvas.toDataURL() instead of taking a Puppeteer screenshot.
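Two of those options fit in a short sketch. waitForFrames is a hypothetical page-side helper that resolves after a given number of animation frames, a frame-based cousin of the fixed delay. dataUrlToBuffer turns the string returned by canvas.toDataURL() into bytes you can write to disk. The canvas#main-graph-canvas selector in the usage comment is only an example. For a WebGL canvas, toDataURL() may return a blank image unless the context was created with preserveDrawingBuffer: true, and you only control that on your own page.

```javascript
// Hypothetical page-side helper: resolve after `count` animation frames.
// Meant for page.evaluate(waitForFrames, 30) on a page you control.
function waitForFrames(count) {
  return new Promise(resolve => {
    let remaining = count;
    const tick = () => {
      remaining -= 1;
      if (remaining <= 0) resolve();
      else requestAnimationFrame(tick);
    };
    requestAnimationFrame(tick);
  });
}

// Node-side helper: decode a "data:image/png;base64,..." string into a Buffer.
function dataUrlToBuffer(dataUrl) {
  const match = /^data:([^;,]+);base64,(.*)$/.exec(dataUrl);
  if (!match) throw new Error('expected a base64 data URL');
  return Buffer.from(match[2], 'base64');
}

// Usage with Puppeteer (inside an async function):
//   await page.evaluate(waitForFrames, 30);
//   const dataUrl = await page.$eval('canvas#main-graph-canvas', c => c.toDataURL('image/png'));
//   require('fs').writeFileSync('canvas.png', dataUrlToBuffer(dataUrl));
```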

Infinite feeds

Twitter, LinkedIn, Instagram: pages that never really finish loading because they wait for you to scroll. Network idle is never going to fire here, so there's no point waiting for it. The fix is to screenshot the visible viewport after the first batch of content arrives instead of capturing the full page, and to scroll programmatically if you need more.

Lazy-loaded images below the fold

In a full-page screenshot, the browser renders the whole page at once, but images with loading="lazy" only start downloading when they enter the viewport — which in headless mode may never happen. I covered this one in detail in a separate post on full-page screenshots and lazy loading, with the diagnostics and a couple of workarounds (scroll through before capturing, or strip the lazy attributes entirely).
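The strip-the-lazy-attribute workaround is small enough to sketch here. Both functions are hypothetical page-side helpers, meant for page.evaluate and page.waitForFunction respectively. Switching loading to eager is intended to make the browser fetch the deferred images, but it's worth verifying on your target page and browser version.

```javascript
// Hypothetical page-side helper: switch lazy images to eager loading so a
// full-page capture doesn't depend on them scrolling into view.
// Returns how many images were changed.
function forceEagerImages() {
  const lazy = document.querySelectorAll('img[loading="lazy"]');
  lazy.forEach(img => { img.loading = 'eager'; });
  return lazy.length;
}

// Page-side predicate: true once every <img> has finished (or failed) loading.
function allImagesComplete() {
  return Array.from(document.images).every(img => img.complete);
}

// Usage with Puppeteer (inside an async function):
//   await page.evaluate(forceEagerImages);
//   await page.waitForFunction(allImagesComplete, { timeout: 15000 });
//   await page.screenshot({ path: 'full.png', fullPage: true });
```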

Cookie banners and modals

They show up right when the page is ready to be screenshotted, and they ruin the composition. That's a whole topic of its own — if you're running into it, there's a walkthrough on hiding cookie banners, ads, and chat widgets in screenshots.
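One common approach, sketched here, is to inject a stylesheet that hides the overlay before capturing. page.addStyleTag is a real Puppeteer method. hideElementsCss and the selectors in the usage comment are hypothetical, so substitute whatever the Elements panel shows on your target site.

```javascript
// Hypothetical helper: build a stylesheet that hides the given elements.
function hideElementsCss(selectors) {
  if (selectors.length === 0) return '';
  return `${selectors.join(',\n')} {\n  display: none !important;\n}`;
}

// Usage with Puppeteer (inside an async function, before page.screenshot):
//   await page.addStyleTag({
//     content: hideElementsCss(['#cookie-banner', '.chat-widget']),
//   });
```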

When you'd rather not maintain this yourself

Everything above is code you have to keep alive: updating Puppeteer, chasing Chromium security patches, dealing with flakes in CI, handling timeouts on slow pages, managing browser pools. If screenshots are central to what you're building, running your own Chromium fleet makes sense and you'll develop real expertise. If screenshots are a side task (generating thumbnails for a directory, sending reports to clients, producing OG images), all that plumbing eats into time you'd rather spend on your actual product.

In my own Screenshot API at screenshotrun.com, the four strategies above collapse into query parameters: wait_until=networkidle handles the network-quiet case, wait_for_selector=[data-testid="report-sources"] waits for a specific element, delay=500 tacks on a final pause for animations. Taking a screenshot of the same Plausible dashboard becomes a single HTTP call:

```bash
curl "https://screenshotrun.com/api/v1/screenshots/capture?url=https://plausible.io/plausible.io&wait_until=networkidle&delay=1000&full_page=true&response_type=image" \
  -H "Authorization: Bearer YOUR_TOKEN" \
  -o dashboard.png
```

Whether that's worth it depends on your volume and priorities. If you're taking 50 screenshots a day for a side project, Puppeteer on your own box works fine and gives you full control. If you're running thousands per day and need uptime without managing a Chromium fleet, an API is easier. I went through that tradeoff in more detail in a separate post on build vs buy for screenshot APIs, including rough cost math and decision criteria.
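If you call the same endpoint from JavaScript rather than curl, building the query string with URLSearchParams handles the encoding of the brackets and quotes in a wait_for_selector value. The endpoint and parameter names are the ones from the curl example above. buildCaptureUrl is a hypothetical helper, and token handling is left out.

```javascript
// Hypothetical helper: build a capture URL for the endpoint shown above,
// letting URL.searchParams percent-encode each value.
function buildCaptureUrl(params) {
  const endpoint = new URL('https://screenshotrun.com/api/v1/screenshots/capture');
  for (const [key, value] of Object.entries(params)) {
    endpoint.searchParams.set(key, String(value));
  }
  return endpoint.toString();
}

const captureUrl = buildCaptureUrl({
  url: 'https://plausible.io/plausible.io',
  wait_for_selector: '[data-testid="report-sources"]',
  full_page: true,
  response_type: 'image',
});
// Send it with fetch(captureUrl, { headers: { Authorization: 'Bearer YOUR_TOKEN' } }).
```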

The one thing to take away

"Loaded" isn't a single moment — it's four of them: DOM ready, load event fired, network quiet, the specific element you care about present. Static pages only need the second one. Most SPAs need the third, like Plausible, where swapping waitUntil: 'load' for waitUntil: 'networkidle2' fixed everything. Sites with constant background traffic, canvas charts without a final state, or heavy client-side hydration need the fourth — an explicit wait for an element or content via waitForSelector or waitForFunction.

And from the Plausible experience specifically: before you write a selector, open DevTools and check what you're actually looking at. What looks like an SVG chart might be a canvas. What looks like a table might be a stack of flex-layout divs. Ten seconds of inspection saves you ten seconds of timeout on every run. More importantly, it saves you from being confidently wrong about markup that doesn't exist.

If you're running into adjacent problems, there are deeper dives on the blog: lazy-loaded images, hiding cookie banners, and screenshots for visual regression testing in CI/CD. They all stack with what I covered here.

Vitalii Holben

Vitalii Holben